Why Completion Rates Look Fine But Learning Effectiveness Still Falls Short

High completion rates feel like a win for L&D, but they often hide poor learning effectiveness. Learn why performance outcomes suffer and how to design training that transfers to the job.

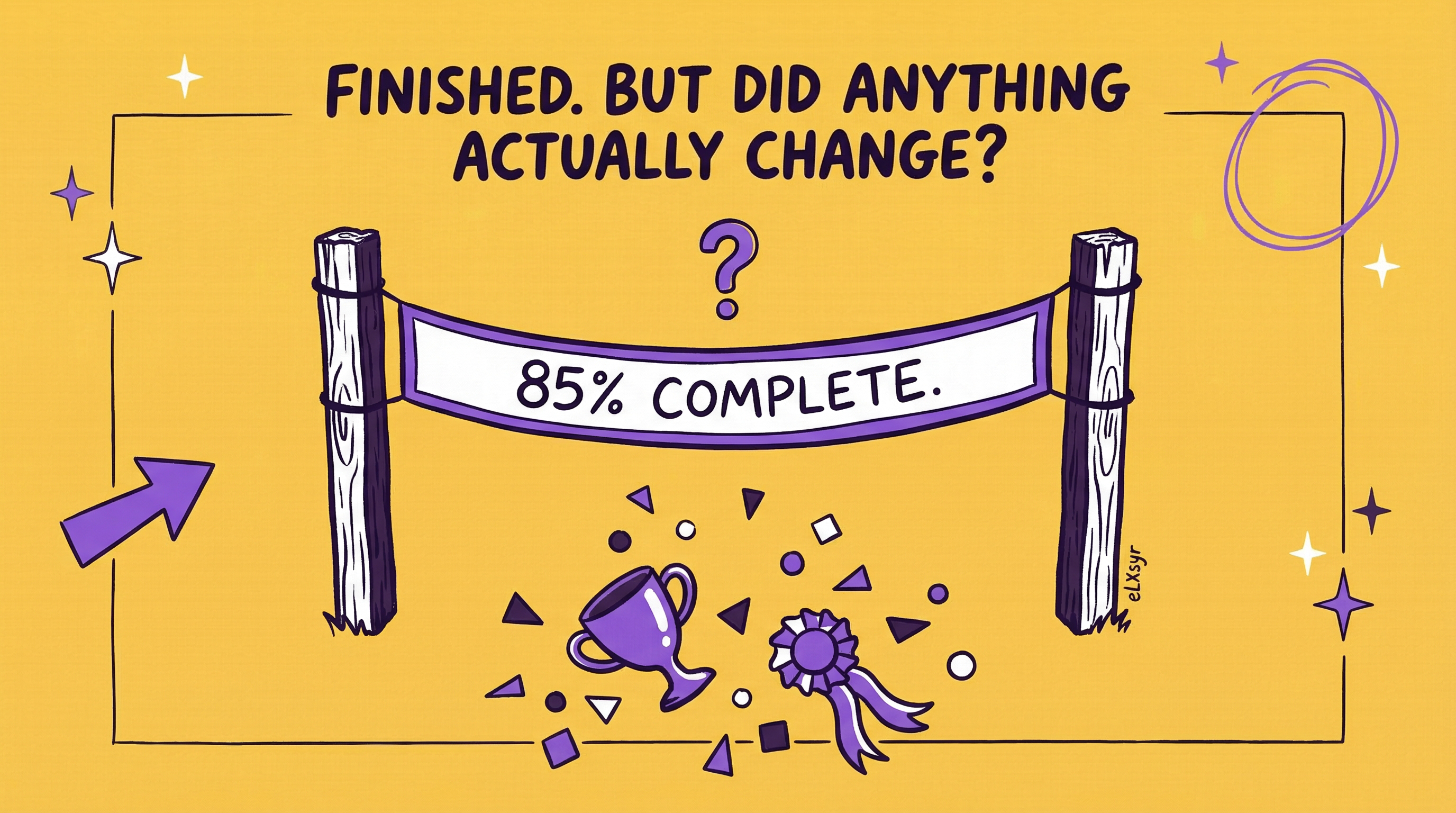

You build a course. Learners finish it. Dashboards show 85% completion. Leaders nod in approval. But sales reps still miss quotas. Customer service agents repeat the same errors. Performance outcomes stay flat.

This gap frustrates every L&D pro. Completion rates look solid, but real work shows no change. Employees check boxes without changing behaviors. That's the core problem with most training today.

It's time to rethink what success means. Focus on learning effectiveness, not just finish lines. This post shows why completions fool you and how to measure what matters.

What This Means in Real Work

Completion rates track whether someone clicked 'done' on a module. They ignore whether the person learned anything useful. High numbers show activity, not results.

Take a sales training course. Ninety percent finish it. Great, right? But quotas don't budge. Reps can't apply objection-handling techniques in calls. The course covered theory but skipped practice on real scenarios. Completion hid the failure.

Or think of compliance training. Everyone completes it fast. Yet errors persist on the job. Learners skimmed videos without grasping the risks. Completion rates signal engagement or ease, not skill gain or transfer to the job.

Real work demands behavior change. A finished course proves nothing about that. Low performance outcomes expose the truth. Training must bridge the gap from screen to daily tasks.

Practical Steps to Boost Learning Effectiveness

Junior instructional designers (IDs) can fix this now. Start small. Build habits that prove value. Here are four steps you can take today.

Step 1: Define job-connected goals upfront. Before storyboards, list one clear behavior change. Ask: What will learners do differently on Monday? For customer service training, target 'resolve 20% more tickets without escalation.' Tie every slide to that.

Step 2: Add practice with feedback. Skip endless slides. Build scenarios that mimic work. Use branching choices or drag-and-drop tasks. Give instant feedback: 'Good job spotting the risk. In real calls, say this instead.'
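A branching scenario can be sketched as a small data structure before you open an authoring tool. The example below is a minimal sketch, not a real tool's format. The names `SCENARIO` and `run_scenario`, the prompt, and the feedback wording are all hypothetical and only illustrate the idea of a choice that leads to feedback and a next step.

```python
# Hypothetical branching scenario for objection handling.
# Each node has a prompt and choices; each choice carries feedback
# and the id of the next node (None ends the scenario).
SCENARIO = {
    "start": {
        "prompt": "A customer says the price is too high. What do you do?",
        "choices": {
            "a": {
                "text": "Offer a discount right away.",
                "feedback": "Try again. You gave ground before learning the real concern.",
                "next": "start",  # send the learner back to retry
            },
            "b": {
                "text": "Ask what they are comparing the price to.",
                "feedback": "Good job. You surfaced the real objection first.",
                "next": None,  # scenario ends here
            },
        },
    },
}


def run_scenario(scenario, answers, start="start"):
    """Walk the scenario with a list of choice keys and return the feedback shown."""
    feedback_log = []
    node_id = start
    for answer in answers:
        if node_id is None:  # scenario already finished
            break
        choice = scenario[node_id]["choices"][answer]
        feedback_log.append(choice["feedback"])
        node_id = choice["next"]
    return feedback_log


# A learner who picks the weak option first, then the stronger one.
log = run_scenario(SCENARIO, ["a", "b"])
```

Writing the branches out this way makes it easy to check that every choice has feedback before any build time is spent.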

Step 3: Quiz for application, not recall. Ditch multiple-choice trivia. Ask: 'Your boss pushes back on this budget. Use two techniques from module 2.' Right answers show understanding. Wrong ones flag gaps.

Step 4: Track one post-course action. Add a final task: 'Email your manager one idea you'll try this week.' Or survey: 'Did you use this skill today? Yes/No/How.' Collect data weekly. Share wins in team meetings.
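If you collect the Yes/No survey weekly, a few lines of code can turn the answers into a usage rate per week. This is a rough sketch under assumed inputs: the function name `weekly_use_rate` and the `(week, answer)` data shape are made up for illustration, and your survey tool's export will likely look different.

```python
from collections import defaultdict


def weekly_use_rate(responses):
    """Return {week: share of 'yes' answers}.

    responses: iterable of (week, answer) pairs, where answer is 'yes' or 'no'
    (case and surrounding spaces are ignored).
    """
    counts = defaultdict(lambda: [0, 0])  # week -> [yes_count, total_count]
    for week, answer in responses:
        counts[week][1] += 1
        if answer.strip().lower() == "yes":
            counts[week][0] += 1
    return {week: yes / total for week, (yes, total) in counts.items()}


# Illustrative data only: four answers in week 1, two in week 2.
sample = [(1, "Yes"), (1, "No"), (1, "yes"), (1, "no"), (2, "Yes"), (2, "Yes")]
rates = weekly_use_rate(sample)
```

A rising rate across weeks is a simple, shareable signal that the skill is being used, though self-reports should be read alongside manager observations.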

Common Mistakes That Kill Learning Effectiveness

- Mistake 1: Chasing completion numbers. You shorten courses to boost finishes. Learners skim faster but retain less. Result: Pretty dashboards, zero transfer to job. Focus on depth over speed.

- Mistake 2: No real practice. You pack in videos and PDFs. Learners watch passively. No muscle memory forms. They freeze in real situations. Always include hands-on tasks.

- Mistake 3: Ignoring the job context. Content floats in theory land. No link to daily pain points. Employees tune out. Research their workflows first. Make examples from their world.

- Mistake 4: Forgetting follow-up. When the course ends, so does support. Skills fade without reinforcement. One nudge email a week can help keep gains alive. Skip this, and performance outcomes can revert fast.

Leader Lens: Evaluate Impact, Risk, and Adoption

Senior leaders and L&D heads need proof beyond activity. Measure learning effectiveness by tracking behavior change and business results. Compare error rates before and after training. Count tickets resolved without handoff. Check sales close rates for trained reps.
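A before-and-after comparison comes down to one piece of arithmetic: the relative change from the baseline. The sketch below assumes you already have one number from before training and one from after. The function name `relative_change` and the sample figures are hypothetical.

```python
def relative_change(before, after):
    """Relative change from before to after; -0.25 means a 25% drop."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


# Illustrative numbers only: errors per 100 tickets, before and after training.
error_change = relative_change(8, 6)

# Illustrative numbers only: tickets resolved without handoff per week.
resolved_change = relative_change(40, 50)
```

Here `error_change` is -0.25 (a 25% drop in errors) and `resolved_change` is 0.25 (a 25% rise). Treat a single before-and-after pair as a signal, not proof, since other factors can move the same numbers.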

Spot risks in low adoption. If only 40% of learners report using the skills, expect rework. Weigh budget against projected gains. High completion with flat metrics signals poor design. Push for practice-heavy overhauls.

Adoption thrives on relevance. Survey managers on observed changes. True impact shows in sustained performance outcomes, not one-off spikes.

Freelancer Lens: Deliver Faster, Cut Rework, Charge Premium

As a freelancer, shift to performance outcomes to stand out. Clients tire of completion-focused pitches. Show how your courses drive transfer to the job. Build quick prototypes with job simulations. Test with two users before the full build.

This cuts rework. Clients preview results early, so they send fewer change requests. Deliver faster by skipping fluff. Charge more: 'My courses guarantee a 20% skill application rate, or I refine them for free.' Premium clients pay for proof of impact.

Build a case study library. One page per project: 'Boosted resolution rates by X%. Here's how.' Land bigger gigs with data, not promises.

Quick Checklist for Learning Effectiveness

- Does every module tie to a job behavior? List them.

- Are there 3+ practice activities per course?

- Do quizzes test application, not memory?

- Is one follow-up measure planned for after training?

- Did you validate goals with a stakeholder?

- Are you tracking at least one performance outcome weekly?

- Is the content trimmed to essentials without cutting practice?

FAQ

Why do high completion rates not mean success?

They measure activity, not skill use. Learners finish but forget or fail to apply on the job.

How do I prove transfer to the job?

Observe behaviors before and after training. Collect learner self-reports through surveys. Track metrics like error rates or output speed.

What's one quick win for junior IDs?

Add branching scenarios. They add practice without requiring extra tools, and they can lift engagement and retention.